On top and around Kubernetes

Introduction

Kubernetes is a dominant force in the world of container orchestration, and it continues to grow. The ecosystem around Kubernetes has changed dramatically over the past few years, and this article will help you understand how these technologies are all connected.

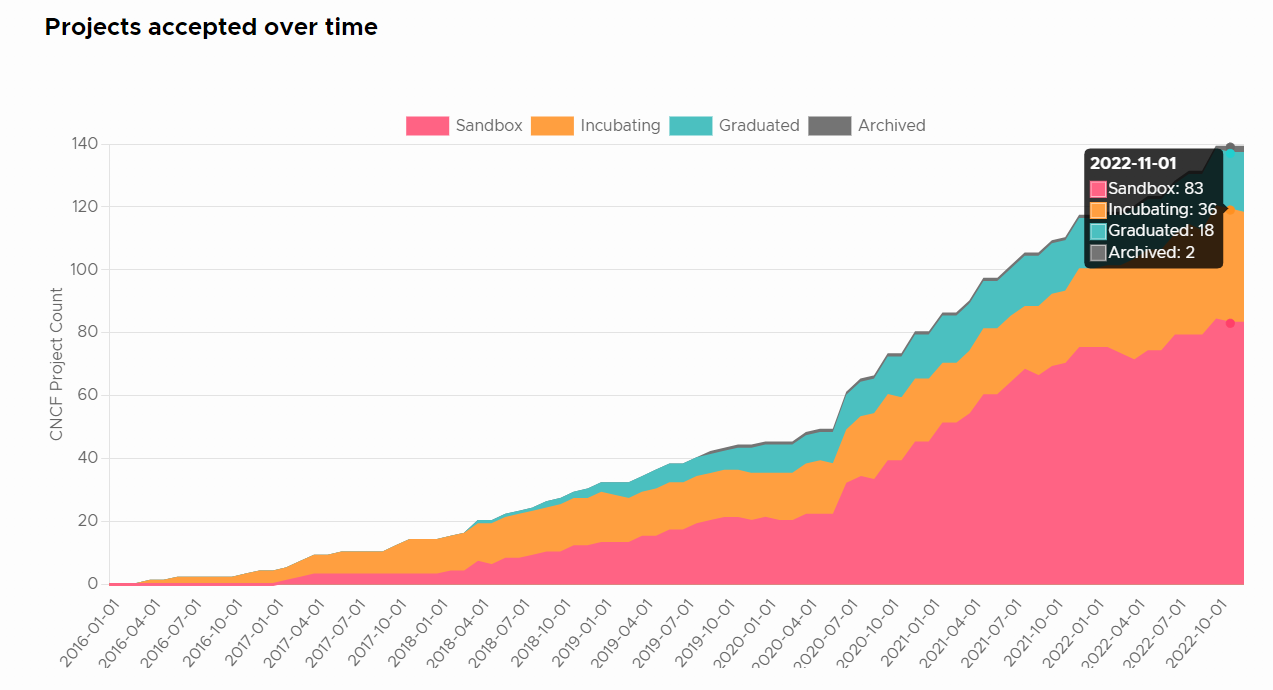

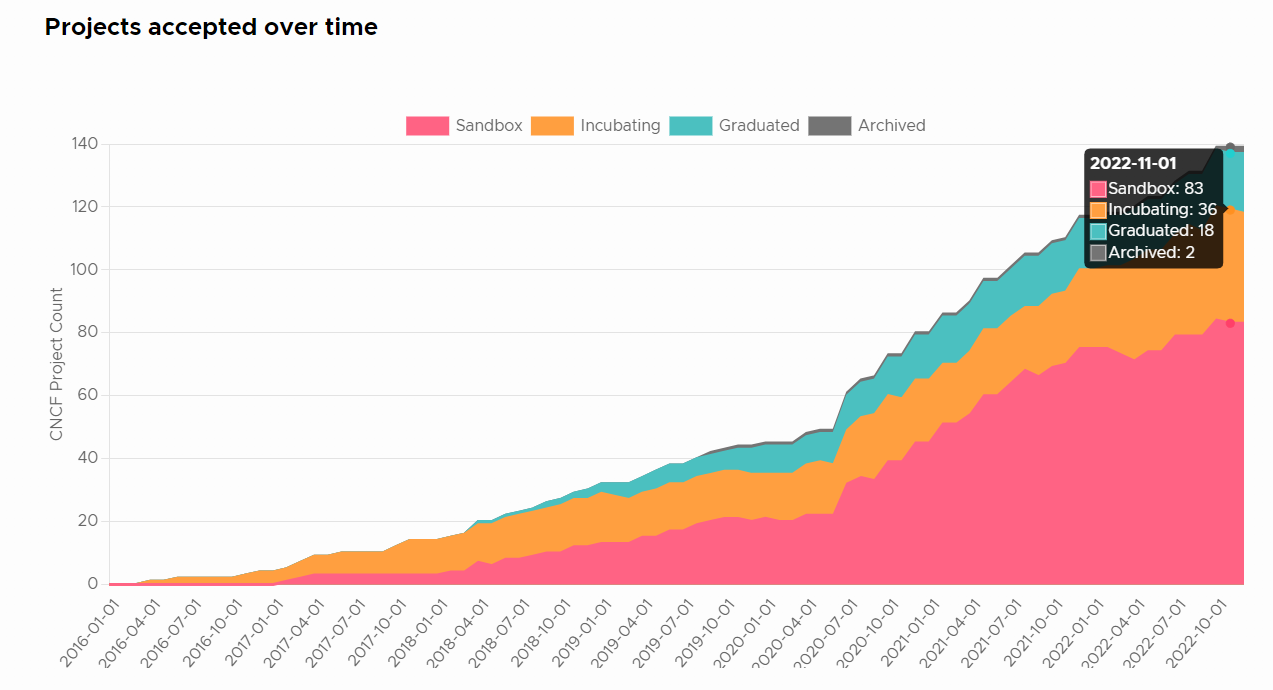

Cloud Native Accepted Projects:

Image Source: Cloud Native Computing Foundation

More and more independent software vendors (ISVs) are building cloud native solutions on top of and around the Kubernetes ecosystem to help customers leverage the power of Kubernetes, with 141 projects accepted by the CNCF as of December 2022. The key point is that these ISVs are not directly competing with each other, because they all add value in different ways. This has implications for the future of Kubernetes as a whole. Let’s take a closer look at how these technologies are connected, what they do, and why they matter.

Containers

Containers are the foundation of Kubernetes. They can be used for many different types of applications, and they are what makes it possible to run applications on top of Kubernetes.

Containers are lightweight, portable, and resource-efficient. They let you package an application and all of its dependencies into a single unit that can run anywhere, and they can be started, stopped, and moved around easily. Other benefits include security and speed.

While containers have been around for a while now, they continue to evolve in terms of features and capabilities.

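The best-known container runtime options are Docker, containerd, and CRI-O. Docker is the most widely used tool for building and running containers, while containerd and CRI-O are lightweight runtimes that implement the Kubernetes Container Runtime Interface (CRI) directly. rkt, an earlier alternative, has been archived by the CNCF and is no longer developed.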

Runtime - Container Runtime(15)

Orchestration

Container orchestration is the most complex concept in Kubernetes. It is the process of automating the deployment, scaling, and management of containers in a distributed system: managing the lifecycle of containers, scheduling them on nodes, managing the communication between them, and managing the resources of the cluster, such as CPU, memory, and storage.
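
To make the scheduling step concrete, here is a minimal sketch of resource-aware placement. It assumes each container declares a CPU and memory request and each node advertises its free capacity. It is a first-fit toy for illustration only, not the algorithm the Kubernetes scheduler uses, and the names `Node`, `Pod`, and `schedule` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    free_cpu_millis: int   # free CPU in millicores (1000 = one core)
    free_memory_mib: int   # free memory in MiB
    pods: list = field(default_factory=list)


@dataclass
class Pod:
    name: str
    cpu_millis: int
    memory_mib: int


def schedule(pods, nodes):
    """Place each pod on the first node with enough free CPU and memory.

    Returns a dict mapping pod name to node name, or to None when no node fits.
    Node capacity is reduced in place as pods are placed.
    """
    placement = {}
    for pod in pods:
        placement[pod.name] = None
        for node in nodes:
            fits_cpu = node.free_cpu_millis >= pod.cpu_millis
            fits_memory = node.free_memory_mib >= pod.memory_mib
            if fits_cpu and fits_memory:
                node.free_cpu_millis -= pod.cpu_millis
                node.free_memory_mib -= pod.memory_mib
                node.pods.append(pod.name)
                placement[pod.name] = node.name
                break
    return placement


if __name__ == "__main__":
    nodes = [Node("node-a", 1000, 2048), Node("node-b", 2000, 4096)]
    pods = [Pod("web", 500, 512), Pod("db", 1500, 2048), Pod("cache", 600, 1024)]
    print(schedule(pods, nodes))
```

In this example the third pod does not fit on either node, which loosely mirrors a pod that stays pending until capacity is added to the cluster.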

Kubernetes was an early player in the container orchestration space: it was announced in 2014 and reached its 1.0 release in July 2015. Since then it has continued to expand its feature set, adding things like rolling updates and service health checks, and its components expose metrics in the Prometheus format for collection and export.
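
As an illustration of rolling updates and health checks, the sketch below builds a Deployment manifest as a plain Python dictionary and prints it as JSON. The application name, image `example.com/web:1.0`, port 8080, and the `/healthz` path are all assumptions made for the example; the field names follow the `apps/v1` Deployment API.

```python
import json


def build_deployment(name, image, replicas=3):
    """Return a Deployment manifest with a rolling update strategy and HTTP probes."""
    labels = {"app": name}
    probe_target = {"path": "/healthz", "port": 8080}  # assumed health endpoint
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            # Replace pods one at a time and never drop below the desired count.
            "strategy": {
                "type": "RollingUpdate",
                "rollingUpdate": {"maxSurge": 1, "maxUnavailable": 0},
            },
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": 8080}],
                            # Traffic is only sent to pods that pass this check.
                            "readinessProbe": {
                                "httpGet": probe_target,
                                "periodSeconds": 5,
                            },
                            # The container is restarted if this check keeps failing.
                            "livenessProbe": {
                                "httpGet": probe_target,
                                "initialDelaySeconds": 10,
                                "periodSeconds": 10,
                            },
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_deployment("web", "example.com/web:1.0"), indent=2))
```

Because `kubectl` accepts JSON as well as YAML, the printed output could be piped into `kubectl apply -f -` against a test cluster.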

Kubernetes isn’t alone in terms of functionality either. Other container orchestrators exist today, and they offer different ways to handle certain aspects of application deployment and management, such as autoscaling based on load patterns detected within a cluster, or running stateful applications like databases on storage services from external providers such as Google Cloud Platform (GCP), AWS EBS volumes, or Azure Disk Storage.

The most popular options include vanilla Kubernetes, the upstream open-source project, which provides container orchestration, service discovery, and autoscaling without any vendor additions. OpenShift is a platform based on Kubernetes that provides additional features such as a web console, integrated CI/CD, and support for multiple languages. Rancher is a platform for deploying and managing multiple Kubernetes clusters from a single web console. K3s is a lightweight version of Kubernetes designed for edge computing and IoT applications.

Platform - Certified Kubernetes - Distribution(65)

- Accordion

- AgoraKube

- AIStation

- Alauda Container Platform (ACP)

- AlviStack Ansible Collection for Kubernetes

- Amazon Elastic Kubernetes Service Distro (Amazon EKS-D)

- ASUS Cloud Infra

- Azure (AKS) Engine

- BizKube

- BoCloud BeyondContainer

- Canonical Charmed Kubernetes

- CARS TaiChu

- China Mobile CMIT PaaS

- China-ASEAN Information Harbor Container Cloud

- Cloudboostr

- Cocktail Cloud

- Constellation

- D2iQ Konvoy

- DaoCloud Enterprise

- Desktop Kubernetes

- Diamanti Spektra

- EasyStack Kubernetes Service (EKS)

- Elastisys Compliant Kubernetes

- Ericsson Cloud Container Distribution

- Flant Deckhouse

- Flexkube

- Fury Distribution

- Giant Swarm Managed Kubernetes

- GienTech Kubernetes Platform (KubeGien)

- H3C CloudOS

- HarmonyCloud Container Platform

- HPE Ezmeral Runtime Enterprise

- Inspur AIStation Training Platform

- Intel Smart Edge Open

- JD.com JDOS

- k0s

- k3s

- K8splus

- Kamaji

- KubeCube

- Kubermatic Kubernetes Platform

- Kubesphere

- Kublr

- kURL

- Linklogis Kubernetes Platform

- MetalK8s

- MicroK8s

- Mirantis Kubernetes Engine

- Netease Qingzhou Microservice

- Oracle Cloud Native Environment

- Paas-TA Container Platform

- Palette

- Platform9 Managed Kubernetes

- QBO

- Rancher Kubernetes

- Red Hat OpenShift

- RKE Government

- Robin CNP

- TenxCloud Container Enterprise

- Typhoon

- vcluster

- VMware Tanzu Kubernetes Grid

- VMware Tanzu Kubernetes Grid Integrated Edition

- Whitestack WhiteMist

- Wind River Studio Cloud Platform

Platform - Certified Kubernetes - Hosted(52)

- Alibaba Cloud Container Service for Kubernetes

- Amazon Elastic Container Service for Kubernetes (EKS)

- Azure Kubernetes Service (AKS)

- Baidu Cloud Container Engine

- Bizmicro

- Catalyst Kubernetes Service

- China Mobile KCS

- Chinaunicom Kubernetes Engine

- Civo Kubernetes

- CloudLink

- Conoa Proact Managed Container Platform

- DigitalOcean Kubernetes

- eBaoCloud

- ELASTX Private Kubernetes

- Exoscale

- FUJITSU Hybrid IT Service Digital Application Platform Kubernetes Service Hatoba

- German Edge Cloud Kubernetes Services (GKS)

- Google Kubernetes Engine (GKE)

- Huawei Cloud Container Engine (CCE)

- IBM Cloud Kubernetes Service

- InCloud OS

- Inspur Cloud Container Engine and Inspur Open Platform

- Inspur Open Platform for ARM

- InsureMO

- IXcloud Kubernetes Service

- JD Cloud JCS for Kubernetes

- KubeSphere®️ (QKE)

- Linode Kubernetes Engine

- Mail.Ru Cloud Containers

- Microsoft AKS Engine for Azure Stack

- Nexastack Managed Kubernetes

- NHN Kubernetes Service (NKS)

- NIFCLOUD Kubernetes Service Hatoba

- Nirmata Managed Kubernetes

- Nutanix Karbon

- Oracle Container Engine

- Orka

- OVH Managed Kubernetes Service

- Rafay

- Red Hat OpenShift Dedicated

- Red Hat OpenShift on IBM Cloud

- Ridge Kubernetes Service

- Samsung Cloud Platform - SCP Kubernetes Engine (SKE)

- SAP Certified Gardener

- Scaleway Kubernetes Kapsule

- STACKIT Kubernetes Engine

- SysEleven MetaKube

- Taikun

- Tencent Kubernetes Engine (TKE)

- Vultr Kubernetes Engine

- ZStack Edge

- ZTE TECS OpenPalette

Storage

Many organizations struggled with storage for containerized applications long before Kubernetes came along, and Kubernetes adds its own concepts and abstractions on top. The core storage abstractions are the PersistentVolume (PV), a piece of storage made available to the cluster, and the PersistentVolumeClaim (PVC), which lets you request persistent storage from the cluster. There are many different options for providing persistent volumes. Common choices are NFS or GlusterFS for shared file storage and Ceph RBD for block storage, with S3-compatible object stores such as Amazon S3 or MinIO used alongside them for object data.
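
A claim for persistent storage can be sketched as follows. The example builds a PersistentVolumeClaim manifest as a Python dictionary, plus the volume entry a pod would use to reference it. The claim name `data`, the 1Gi size, and the helper names `build_pvc` and `volume_for` are assumptions for illustration; the field names follow the core `v1` API.

```python
import json


def build_pvc(name, size, storage_class=None):
    """Return a PersistentVolumeClaim manifest requesting `size` of storage."""
    pvc = {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # ReadWriteOnce: the volume can be mounted read-write by a single node.
            "accessModes": ["ReadWriteOnce"],
            "resources": {"requests": {"storage": size}},
        },
    }
    # When no class is given, the cluster's default StorageClass (if any) applies.
    if storage_class is not None:
        pvc["spec"]["storageClassName"] = storage_class
    return pvc


def volume_for(claim_name, volume_name="data"):
    """Return the pod-level volume entry that binds a pod to an existing claim."""
    return {
        "name": volume_name,
        "persistentVolumeClaim": {"claimName": claim_name},
    }


if __name__ == "__main__":
    print(json.dumps(build_pvc("data", "1Gi"), indent=2))
    print(json.dumps(volume_for("data"), indent=2))
```

The pod never names a storage backend directly. It only refers to the claim, which is what lets the same workload run on NFS, Ceph, or a cloud disk.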

Popular options for cloud-native storage on Kubernetes include Ceph and GlusterFS, which are open source, and Portworx, which is a commercial product. Ceph is a distributed storage system that offers object, block, and file storage, GlusterFS is a distributed file system, and Portworx is a distributed block storage system.

Storage on top of Kubernetes has evolved rapidly: Gluster recently announced support for KVM-based clusters, NFSv4 has gained some interesting new features, and Ceph has added support for Intel Optane SSDs. Many of the options available now did not exist two or three years ago. The ecosystem is growing quickly, and as a result the divide between what’s possible with on-premises storage and what’s available in the cloud is rapidly narrowing. This makes it easier than ever to build solutions that can run both on-premises and in the cloud.

Runtime - Cloud Native Storage(66)

- Alibaba Cloud File Storage

- Alibaba Cloud File Storage CPFS

- Alluxio

- Amazon Elastic Block Store (EBS)

- Arrikto

- Azure Disk Storage

- Carina

- Ceph

- CloudCasa by Catalogic Software

- Commvault

- Container Storage Interface (CSI)

- CubeFS

- Curve

- DataCore Bolt

- Datera

- Dell EMC

- Diamanti

- DriveScale

- Gluster

- Google Persistent Disk

- Hitachi

- HPE Storage

- Huawei

- HwameiStor

- IBM Storage

- INFINIDAT

- Inspur Storage

- IOMesh

- Ionir

- JuiceFS

- K8up

- Kasten

- LINSTOR

- Longhorn

- MinIO

- MooseFS

- NetApp

- Nutanix Objects

- Ondat

- OpenEBS

- OpenIO

- ORAS

- Pengyun Network

- Piraeus Datastore

- Portworx

- Pure Storage

- QingStor

- Qumulo

- Quobyte

- Robin Systems

- Rook

- SandStone

- Sangfor EDS

- ScaleFlash

- Scality RING

- Soda Foundation

- Stash by AppsCode

- StorPool

- Swift

- Trilio

- Triton Object Storage

- Velero

- Vineyard

- XSKY

- YRCloudFile

- Zenko

Management

Kubernetes is a great platform, but it needs a lot of management. You can’t just turn it on and expect it to work. You need to manage the configuration, deployment, upgrades, monitoring, alerting, and many other tasks. This requires a significant amount of time and effort that many organizations don’t have or want to spend on their infrastructure.

The problem is that Kubernetes can be difficult to manage. It’s not an easy task to scale up a cluster, migrate workloads between clusters, or perform the other tasks that sysadmins need in order to keep their infrastructure running smoothly. As a result, many companies are looking for ways to simplify the management of their Kubernetes clusters. Tools such as kOps, which provisions and upgrades clusters, and Velero (formerly Heptio Ark), which backs up and restores cluster resources, make this easier. But there are still a lot of moving parts, which means you need someone on staff who is familiar with how they all fit together.

Kubernetes provides an API server that you use to deploy applications to a cluster and manage their lifecycle. This is a powerful tool for managing your infrastructure, but it can also be intimidating for those who aren’t familiar with how it works. The API server provides a layer of abstraction between you and the underlying resources that make up your cluster. As a result, you don’t need to know which components are running behind the scenes in order to get things done; you only need to know the resources you are working with. However, the API server is mainly intended for developers and system integrators. It doesn’t provide a user-friendly interface, and on its own it is not designed for people who want to deploy, manage, and scale their Kubernetes clusters quickly. Every management tool ultimately goes through it, which is why so many products add a friendlier layer on top.
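
One way to see the abstraction the API server offers is that every object lives at a predictable REST path. The helper below is a small sketch of that path layout. The function name `resource_path` is hypothetical; the layout it encodes (core resources under `/api/v1`, grouped resources under `/apis/<group>/<version>`) is the one the Kubernetes API uses.

```python
def resource_path(resource, namespace=None, name=None, group="", version="v1"):
    """Build the REST path for a Kubernetes resource collection or object.

    Core resources (empty group) live under /api/<version>; resources in
    a named API group live under /apis/<group>/<version>.
    """
    prefix = f"/api/{version}" if group == "" else f"/apis/{group}/{version}"
    parts = [prefix]
    if namespace is not None:
        parts.append(f"namespaces/{namespace}")
    parts.append(resource)
    if name is not None:
        parts.append(name)
    return "/".join(parts)


if __name__ == "__main__":
    # All pods in the "default" namespace.
    print(resource_path("pods", namespace="default"))
    # One Deployment named "web" (a hypothetical name) in the apps/v1 group.
    print(resource_path("deployments", namespace="default", name="web", group="apps"))
    # Nodes are cluster-scoped, so there is no namespace segment.
    print(resource_path("nodes"))
```

A path produced this way can be tried against a test cluster with `kubectl get --raw`, which sends the request through your existing credentials.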

Orchestration & Management - Scheduling & Orchestration(22)

Networking

Kubernetes has a variety of networking solutions that you can use to connect your applications. The choice of which one to use depends on your use case and environment, but generally speaking, most users should be able to get started with either Flannel or Calico.

Flannel is a software-defined networking (SDN) solution for Kubernetes that lets you create virtual networks that span multiple hosts. An agent runs on each host and provides an overlay network for the containers. Flannel uses the host’s Linux bridge to create network interfaces, which allows it to be used with any type of container runtime.

Calico is an SDN solution for Kubernetes that provides network policy enforcement and secure connectivity between your containers without requiring additional hardware. It creates a virtual network across your cluster, either by routing pod traffic directly or through an overlay. It provides an additional layer of security by isolating workloads and their associated IP addresses, so that they can only communicate with the resources they need to communicate with. Once a default-deny policy is in place, a container cannot communicate with any other container unless that traffic has been explicitly permitted.
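
The isolation described here is expressed through Kubernetes NetworkPolicy objects, which a policy-capable plugin such as Calico enforces. The sketch below builds two such policies as Python dictionaries: one that denies all incoming traffic in a namespace, and one that then permits a single path. The namespace `shop`, the labels `frontend` and `api`, and port 8080 are assumptions for illustration.

```python
import json


def default_deny_ingress(namespace):
    """Deny all incoming traffic to every pod in the namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-ingress", "namespace": namespace},
        # An empty podSelector selects all pods; listing Ingress with no
        # ingress rules means nothing is allowed in.
        "spec": {"podSelector": {}, "policyTypes": ["Ingress"]},
    }


def allow_ingress(namespace, to_app, from_app, port):
    """Allow pods labelled app=from_app to reach pods labelled app=to_app on one TCP port."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-{from_app}-to-{to_app}", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": {"app": to_app}},
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    "from": [{"podSelector": {"matchLabels": {"app": from_app}}}],
                    "ports": [{"protocol": "TCP", "port": port}],
                }
            ],
        },
    }


if __name__ == "__main__":
    print(json.dumps(default_deny_ingress("shop"), indent=2))
    print(json.dumps(allow_ingress("shop", "api", "frontend", 8080), indent=2))
```

Policies are additive, so applying both leaves the `api` pods reachable only from `frontend` pods on the chosen port.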

Kubernetes networking solutions provide:

- A subnet per node

- An address per pod
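
The arithmetic behind these two guarantees can be sketched with Python's standard `ipaddress` module. The example assumes a cluster range of `10.244.0.0/16` carved into one `/24` per node; the range, the node names, and the helper names are illustrative, and real plugins may reserve some addresses in each subnet (for a gateway, for instance).

```python
import ipaddress
from itertools import islice


def node_subnets(cluster_cidr, node_names, node_prefix=24):
    """Assign one subnet of size /node_prefix to each node, in order."""
    subnets = ipaddress.ip_network(cluster_cidr).subnets(new_prefix=node_prefix)
    return dict(zip(node_names, subnets))


def pod_addresses(subnet, count):
    """Return the first `count` usable host addresses in a node's subnet."""
    return list(islice(subnet.hosts(), count))


if __name__ == "__main__":
    subnets = node_subnets("10.244.0.0/16", ["node-a", "node-b", "node-c"])
    for node, subnet in subnets.items():
        pods = ", ".join(str(ip) for ip in pod_addresses(subnet, 3))
        print(f"{node}: subnet {subnet}, first pod addresses {pods}")
```

With these assumptions a `/16` holds 256 node subnets of 254 usable addresses each, which is why the size of the cluster range is worth choosing before the cluster grows.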

If you are planning on running Kubernetes in production at scale and want low-level control over your network, then I recommend using Calico, because it offers many features that Flannel does not have by default, network policy enforcement among them. You could also opt for something like Weave Net if you just want a simple way to get cluster networking running without installing anything extra on the cluster nodes themselves.

Runtime - Cloud Native Network(25)

Observability

A lot of work has been done to make Kubernetes observability easier. The most common tools used for monitoring and logging are:

- logging: Elasticsearch, Logstash, and Kibana (the ELK stack)

- metrics: Prometheus

- alerting and anomaly detection: Prometheus Alertmanager + Prometheus Operator, a widely used combination.

- visualization: Grafana dashboards and alerts, which can draw on Prometheus as well as Elasticsearch.

The main metrics to monitor for your workloads include requests per second, latency, and incoming throughput for each route or endpoint exposed by your services and pods, as well as disk usage, to name a few.
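
To show how two of these metrics are derived from raw data, here is a minimal sketch that computes requests per second from a cumulative request counter and a latency percentile from individual measurements. The sample values and function names are made up for illustration. Unlike a real monitoring system such as Prometheus, this version does not handle counter resets.

```python
import math


def requests_per_second(samples):
    """Average request rate from (timestamp_seconds, cumulative_count) samples.

    Uses only the first and last sample and assumes the counter never resets.
    """
    (t0, c0), (t1, c1) = samples[0], samples[-1]
    if t1 <= t0:
        raise ValueError("samples must span a positive time interval")
    return (c1 - c0) / (t1 - t0)


def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of
    the measurements at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


if __name__ == "__main__":
    counter_samples = [(0, 100), (30, 400), (60, 700)]
    latencies_ms = [120, 80, 95, 300, 110, 90, 100, 105, 85, 130]
    print("requests/s:", requests_per_second(counter_samples))
    print("p50 latency (ms):", percentile(latencies_ms, 50))
    print("p95 latency (ms):", percentile(latencies_ms, 95))
```

The percentile matters because an average hides outliers: in the sample data most requests finish in about 100 ms, but the 95th percentile exposes the single 300 ms request.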

Observability and Analysis - Monitoring(81)

Observability and Analysis - Logging(21)

Observability and Analysis - Tracing(17)

Kubernetes continues to get new adoption, and that brings with it new technologies.

There are always new applications and technologies that solve different problems. With Kubernetes becoming more mainstream, there will be more opportunities for developers to build new services on top of it. It’s exciting to see how these technologies evolve over time.

Conclusion

As we’ve seen, many new technologies are being developed that are making Kubernetes more powerful and easier to use. We can expect this trend to continue as more developers become interested in container management and look for ways to introduce these tools into their workflows. If you’re a developer looking to get started with Kubernetes, there are many resources available to help you. You can find more information on the official Kubernetes website or on GitHub.

Are you a new engineer just getting started with Kubernetes? The best way to learn Kubernetes is by doing. K1s is a serverless Kubernetes cluster that is ready to go, so you don’t need to install a cluster to start learning. We are open for early access and no card is needed. Take a look now!